What are the risks of ChatGPT and large language models (LLMs)? And what should you do about them?

Over the past few months, we’ve all heard a lot about ChatGPT and how AI technologies like it will revolutionise the way we work and live. But what risks exist behind the hype? And what should organisations be doing to mitigate those risks?

When Microsoft announced the third phase of its long-term partnership with OpenAI in January 2023, it outlined a multiyear, multibillion dollar investment to accelerate AI breakthroughs, with the goal of ensuring these benefits are broadly shared with the world.

Since 2016, Microsoft has committed to building Azure into an AI supercomputer for the world, announcing its first top-5 supercomputer in 2020, and subsequently constructing multiple AI supercomputing systems at massive scale. OpenAI has used this infrastructure to train its breakthrough models, which are now deployed in Azure to power category-defining AI products like GitHub Copilot, DALL·E 2 and ChatGPT.

However, the launch of the new ChatGPT-powered Bing chatbot saw some highly publicised and creepy conversations being held with users. Bing also served up some incorrect answers at the launch.

Yet the attention around the launch was so fervid that it was swiftly followed by Google’s announcement of the rollout of its rival Bard AI service – the hastened nature of which led some to describe it as “rushed” and “botched.” Bigger concerns are also being raised. Turing Award winner Dr. Geoffrey Hinton – known as the “Godfather of AI” – has walked away from Google and can now be heard desperately ringing AI alarm bells.

The press attention has been massive, but it is difficult for the average organisation to determine where the truth lies. Is the rise of these technologies a worrying development with not nearly enough oversight, governance or understanding of the risks? Or should we be jumping on the bandwagon to ensure our organisations are at the forefront of sharing the benefits of these AI breakthroughs with the world?

What are the risks associated with LLMs?

The UK’s National Cyber Security Centre (NCSC) issued advice for all organisations in order to help them understand the risks and decide for themselves (as far as is possible).

The NCSC explains, “An LLM is where an algorithm has been trained on a large amount of text-based data, typically scraped from the open internet, and so covers web pages and – depending on the LLM – other sources such as scientific research, books or social media posts. This covers such a large volume of data that it’s not possible to filter all offensive or inaccurate content at ingest, and so 'controversial' content is likely to be included in its model.”

It lists the following LLM risks:

• they can get things wrong and “hallucinate” incorrect facts.

• they can be biased and are often gullible (in responding to leading questions, for example).

• they require huge compute resources and vast data to train from scratch.

• they can be coaxed into creating toxic content and are prone to injection attacks.

Most worryingly, it’s not always clear when the AI has been compromised like this – and an explanation for why it behaves as it does is not always evident either.

When its AI model developed a skill it wasn’t supposed to have, Google CEO Sundar Pichai explained, “There is an aspect of this which we call … a ‘black box’. You don’t fully understand. And you can’t quite tell why it said this.” When CBS interviewer Scott Pelley then questioned the reasoning for opening to the public a system that its own developers don’t fully understand, Pichai responded, “I don’t think we fully understand how a human mind works either.”

This glib justification highlights another risk associated with LLMs: that in the rush to lead the field, we allow the guardrails to fly off. That’s why it’s more important than ever for governments, regulators, organisations and individuals to educate themselves about these technologies and the risks associated with them.

Risk #1: Misuse

Dr. Geoffrey Hinton has expressed grave concerns about the rapid expansion of AI, saying “it is hard to see how you can prevent the bad actors from using it for bad things.”

Indeed, Alberto Domingo, Technical Director of Cyberspace at NATO Allied Command Transformation, said recently, “AI is a critical threat. The number of attacks is increasing exponentially all the time.”

The NCSC warns that the concern specifically around LLMs is that they might help someone with malicious intent (but insufficient skills) to create tools they would not otherwise be able to deploy. Furthermore, criminals could use LLMs to help with cyberattacks beyond their current capabilities. For example, once an attacker has accessed a network, if they are struggling to escalate privileges or find data, they might ask an LLM and receive an answer that's not unlike a search engine result, but with more context.

Risk #2: Misinformation

We looked at the problems of bias in AI tools in a previous blog – and there are certainly wide-ranging and valid concerns about this. The NCSC warns, “Even when we trust the data provenance, it can be difficult to predict whether the features, intricacies and biases in the dataset could affect your model’s behaviour in a way in which you hadn’t considered.”

Business leaders need to be careful to ensure that they are aware of the limitations of the datasets used to train their AI models, work to mitigate any issues and ensure that their AI solutions are deployed and used responsibly.

Risk #3: Misinterpretation

Beyond the problem of bias, we have the problem of AI “hallucinations”. This strange term refers to the even stranger phenomenon of the output of the AI being entirely inexplicable based on the data on which it has been trained.

There have been some startling examples of this, including the “Crungus” – a snarling, naked, ogre-like figure conjured by the AI-powered image-creation tool Dall-E mini. The Guardian explained that it “exists, in this case, within the underexplored terrain of the AI’s imagination. And this is about as clear an answer as we can get at this point, due to our limited understanding of how the system works. We can’t peer inside its decision-making processes because the way these neural networks ‘think’ is inherently inhuman. It is the product of an incredibly complex, mathematical ordering of the world, as opposed to the historical, emotional way in which humans order their thinking.

The Crungus is a dream emerging from the AI’s model of the world, composited from billions of references that have escaped their origins and coalesced into a mythological figure untethered from human experience. Which is fine, even amazing – but it does make one ask, whose dreams are being drawn upon here? What composite of human culture, what perspective on it, produced this nightmare?”

In another recent example, ChatGPT was asked about including non-human creatures in political decision-making. It recommended four books – only one of which actually existed. Further, its arguments drew on concepts lifted from right-wing propaganda. Why did this failure happen? Having been trained by reading most of the Internet, argues the Guardian, ChatGPT is inherently stupid. It’s a clear example of the old IT and data science adage: garbage in, garbage out.

The implications of the widespread use of LLM answers in our decision-making (especially without human oversight) are frightening.

Risk #4: Information security

The way to get better answers out is, of course, to put better quality data in. But this raises further concerns – most particularly in the area of information security.

The Guardian reported on the case of a digital artist based in San Francisco using a tool called “Have I been trained” to see if their work was being used to train AI image generation models. The artist was shown an image of her own face, taken as part of clinical documentation when she was undergoing treatment. She commented, “It’s the digital equivalent of receiving stolen property. Someone stole the image from my deceased doctor’s files and it ended up somewhere online, and then it was scraped into this dataset. It’s bad enough to have a photo leaked, but now it’s part of a product. And this goes for anyone’s photos, medical record or not. And the future abuse potential is really high.”

This type of experience has led the NCSC to warn that “In some cases, training data can be inferred or reconstructed through simple queries to a deployed model. If a model was trained on sensitive data, then this data may be leaked. Not good.”

There’s also an issue about data privacy and information security when it comes to using the LLMs. Although the NCSC recognises that “an LLM does not (as of writing) automatically add information from queries to its model for others to query” so that “including information in a query will not result in that data being incorporated into the LLM”, “the query will be visible to the organisation providing the LLM (so in the case of ChatGPT, to OpenAI), so those queries are stored and will almost certainly be used for developing the LLM service or model at some point.”

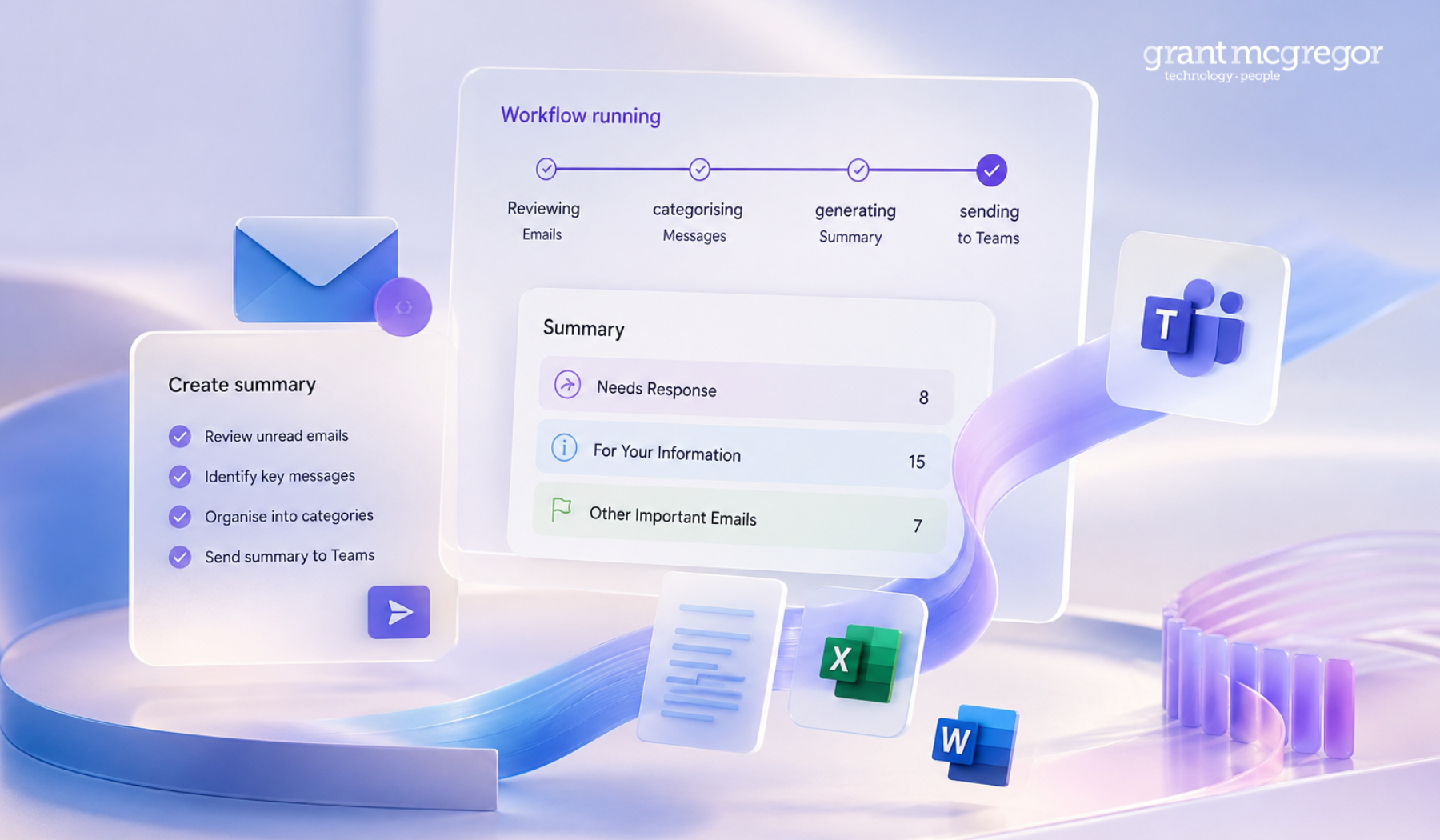

It's one of the reasons that Microsoft has been at pains to point out that while its ChatGPT-based automation Microsoft Copilot can help to discover, organise and present information held across your Microsoft 365 tenancy, Copilot is not trained on data in your tenant.

As a result, the NCSC recommends:

• not to include sensitive information in queries to public LLMs.

• not to submit queries to public LLMs that would lead to issues were they made public.

It acknowledges that organisations must be able to allow their staff to experiment with LLMs if they wish to but emphasises that this must be done in a way that does not put organisational data at risk.

This means taking care about inputs (including queries), securing your infrastructure and working with supply chain partners so they do the same.
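One way to take care over inputs is to strip obviously sensitive details from a query before it leaves your organisation. The sketch below is a minimal illustration only: the `redact` function and the two patterns are our own hypothetical examples, not part of the NCSC guidance, and a real deployment would need rules matched to its own data (customer references, project names and so on).

```python
import re

# Hypothetical patterns for illustration only. They catch simple email
# addresses and UK phone numbers written as "0xxx xxx xxxx".
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "UK_PHONE": re.compile(r"\b0\d{3} ?\d{3} ?\d{4}\b"),
}


def redact(query: str) -> str:
    """Replace anything matching a known sensitive pattern with a placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        query = pattern.sub(f"[{label}]", query)
    return query


draft = "Email jane.doe@example.com or call 0808 157 0192 about the audit"
print(redact(draft))
```

Pattern matching like this will only ever catch the formats it has been told about, so it supports staff guidance and policy rather than replacing them.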

Risk #5: Attack

LLMs are also vulnerable to attack. Data and queries stored online could be hacked, leaked or accidentally made publicly accessible.

What’s more, the NCSC explains that some attackers seek to exploit the fact that, since it’s often challenging or impossible to understand why a model is doing what it’s doing, such systems don’t have the same level of scrutiny as standard systems. Attacks which seek to exploit these inherent characteristics of machine learning systems are known as “adversarial machine learning” or AML.

Further, attackers can also launch injection attacks to compromise the system. As the NCSC explains, “Continual learning can be great, allowing model performance to be maintained as circumstances change. However, by incorporating data from users, you effectively allow them to change the internal logic of your system.”

If your LLM is publicly facing, considerable damage could result from such an attack – especially brand and reputational damage. To avoid this, says the NCSC, “Security must then be reassessed every time a new version of a model is produced, which in some applications could be multiple times a day.”
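To make the NCSC's point about continual learning concrete, here is a deliberately simplified toy in Python. It is not how any real LLM is trained: `FeedbackFilter` is a hypothetical word-counting content filter that we have invented purely to show how user-submitted data can change a system's behaviour, and why the same check needs to be rerun after every update.

```python
from collections import Counter


class FeedbackFilter:
    """Toy content filter that keeps learning from user-submitted labels."""

    def __init__(self) -> None:
        self.blocked_counts: Counter = Counter()
        self.allowed_counts: Counter = Counter()

    def learn(self, text: str, blocked: bool) -> None:
        # Every piece of feedback shifts the word counts the filter relies on.
        target = self.blocked_counts if blocked else self.allowed_counts
        target.update(text.lower().split())

    def is_blocked(self, text: str) -> bool:
        words = text.lower().split()
        blocked_score = sum(self.blocked_counts[w] for w in words)
        allowed_score = sum(self.allowed_counts[w] for w in words)
        return blocked_score > allowed_score


model = FeedbackFilter()
model.learn("claim your free prize now", blocked=True)
model.learn("meeting agenda for monday", blocked=False)
before = model.is_blocked("free prize inside")

# An attacker repeatedly submits feedback labelling the phrase as acceptable.
for _ in range(5):
    model.learn("free prize", blocked=False)
after = model.is_blocked("free prize inside")

print(before, after)
```

The filter blocks the test message before the attacker's feedback and lets it through afterwards, even though nobody touched the code. A fixed set of test queries, rerun whenever the model changes, is one simple way to notice this kind of drift.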

What can we do to minimise the risk of LLM use?

In addition to the advice included in this article, the NCSC has released new machine learning security principles to help you formulate good security and governance around your use of LLMs and other AI models.

Responsible AI use should be aligned with your corporate values and operationalised across all aspects of your organisation, recommends the Boston Consulting Group. This means embedding responsible practices in all AI governance, processes, tools and culture.

What next?

If you’d like to discuss any of the technologies or issues raised in this blog post with our team of experts, please reach out.

Call us: 0808 164 4142

Message us: https://www.grantmcgregor.co.uk/contact-us

Further reading

You can find additional thoughts on machine learning, artificial intelligence and other breakthrough technologies elsewhere on our blog:

• AI’s new role in cyber security

• The 2023 cyber threats for which you should prepare

• Five 2023 business challenges – and how tech can help you respond

• AWS, Azure or Google: Which cloud should you choose?

• What is Cloud Native? Is it something my business should be thinking about?